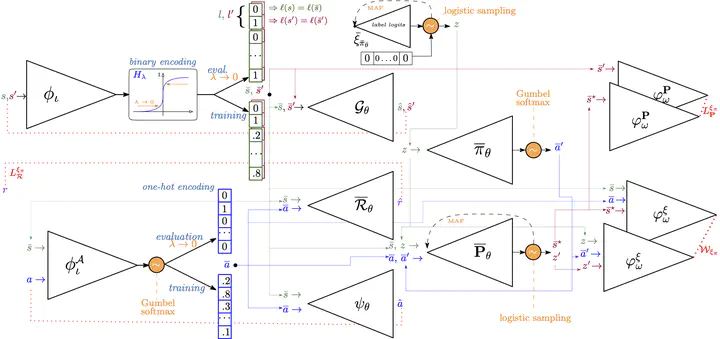

Official implementation of the Wasserstein Auto-encoded MDP (WAE-MDP) framework. WAE-MDPs enable the distilation of (any kind of) reinforcement learning policies into simpler controllers, paired with a discrete, tractable model of the environment (a latent space model). The two are provided with bisimulation guarantees, which allow formally verifying the behaviors of the agent operating under the simplified policy. The source code provided allows for replicating the experiments of the paper Wasserstein Auto-encoded MDPs: Formal Verification of Efficiently Distilled RL Policies with Many-sided Guarantees.